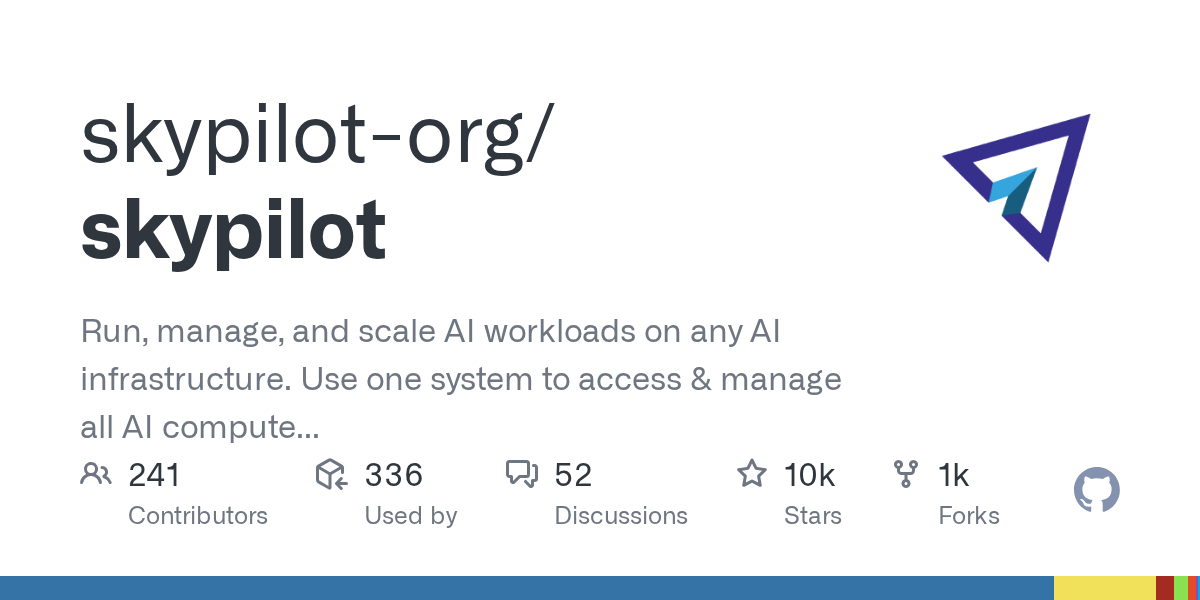

📊 Stats & Trend

| Metric | Value |
| --- | --- |
| ⭐ Stars | 9,675 |
| 📈 Weekly Growth | +9,675 |
| 🔥 Today Growth | +9,675 |
| 📈 Trend | Trending |
| 📊 Trend Score | 7740 |
| 💻 Stack | Python |

Overview

SkyPilot has 9,675 stars and positions itself as a unified platform for running AI workloads across diverse infrastructure. It addresses the growing complexity of managing AI compute by providing a single interface to orchestrate workloads across Kubernetes, Slurm clusters, more than 20 cloud providers, and on-premises systems.

Key Features

• Unified interface for managing AI workloads across Kubernetes, Slurm, and 20+ cloud providers

• Multi-cloud orchestration allowing seamless workload distribution and migration

• On-premises integration for hybrid cloud-to-edge AI deployment scenarios

• Infrastructure abstraction layer that simplifies resource provisioning and scaling

• Python-based implementation providing programmatic control over AI compute resources

• Centralized management system for tracking and coordinating distributed AI jobs

Use Cases

• ML teams needing to distribute training jobs across multiple cloud providers for cost optimization

• Research institutions managing AI workloads across on-premises clusters and public cloud resources

• Enterprises implementing hybrid AI infrastructure strategies combining private and public compute

• DevOps teams standardizing AI deployment pipelines across heterogeneous infrastructure environments

• Organizations seeking to avoid vendor lock-in while maintaining flexibility in AI compute choices
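The cost-optimization use case above comes down to comparing equivalent resources across providers and picking the cheapest one that is currently available. The sketch below illustrates that idea only. It is not SkyPilot's optimizer, and the `Offer` type, the provider names, and all prices are hypothetical values invented for the example.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class Offer:
    """One hypothetical way to obtain an accelerator."""
    provider: str       # hypothetical provider name
    accelerator: str    # e.g. "A100"
    hourly_usd: float   # hypothetical price per hour
    available: bool     # whether capacity can be provisioned right now


def cheapest_offer(offers: Iterable[Offer], accelerator: str) -> Optional[Offer]:
    """Return the cheapest available offer for the accelerator, or None."""
    candidates = [
        o for o in offers
        if o.accelerator == accelerator and o.available
    ]
    if not candidates:
        return None
    return min(candidates, key=lambda o: o.hourly_usd)


# Hypothetical catalog: every name and number here is made up.
OFFERS = [
    Offer("cloud-a", "A100", 4.10, True),
    Offer("cloud-b", "A100", 3.20, False),  # cheapest, but no capacity
    Offer("cloud-c", "A100", 3.60, True),
    Offer("on-prem", "V100", 0.00, True),
]

best = cheapest_offer(OFFERS, "A100")

if __name__ == "__main__":
    print(best)
```

Filtering on availability before comparing prices matters here: the nominally cheapest provider is skipped because it has no capacity, which is the situation a multi-provider setup is meant to handle.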

Why It’s Trending

SkyPilot gained 9,675 stars this week, showing strong momentum in the AI infrastructure category. The growth suggests increasing developer interest in unified infrastructure management as AI workloads become more complex and distributed, and it may reflect a broader shift toward abstraction layers that can handle a growing diversity of AI compute environments.

Pros

• Eliminates infrastructure vendor lock-in by supporting multiple cloud and on-premises environments

• Reduces operational complexity through a unified management interface

• Provides flexibility for cost optimization across different compute providers

• Python-based architecture integrates well with existing ML development workflows

Cons

• Additional abstraction layer may introduce complexity for simple single-cloud deployments

• Potential learning curve for teams already invested in provider-specific tools

• Dependency management across multiple infrastructure types could create debugging challenges

Pricing

Open source and free to use. Users pay only for the underlying infrastructure resources consumed.

Getting Started

Install via the Python package manager (pip) and configure credentials for the target infrastructure providers. The platform provides command-line and programmatic interfaces for deploying workloads.
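As a rough illustration of the workflow described above, the sketch below renders a minimal task file of the kind that is passed to the command-line interface. The field names (`name`, `resources`, `accelerators`, `setup`, `run`) and the commands in the comments follow SkyPilot's documented conventions as best recalled here, so check them against the current documentation. The `render_task_yaml` helper, the task name, and the script names are hypothetical.

```python
from textwrap import indent

# Typical command-line steps (verify against the SkyPilot docs):
#   pip install skypilot        # install the package
#   sky check                   # confirm which providers have credentials
#   sky launch task.yaml        # provision resources and run the task


def render_task_yaml(name: str, accelerators: str, setup: str, run: str) -> str:
    """Render a minimal task file as YAML text using only the standard library."""
    lines = [
        f"name: {name}",
        "resources:",
        f"  accelerators: {accelerators}",
        "setup: |",
        indent(setup.strip(), "  "),
        "run: |",
        indent(run.strip(), "  "),
    ]
    return "\n".join(lines) + "\n"


# Hypothetical task: the name and the commands are placeholders.
task_yaml = render_task_yaml(
    name="hello-task",
    accelerators="A100:1",
    setup="pip install -r requirements.txt",
    run="python train.py",
)

if __name__ == "__main__":
    # Write the result to task.yaml before launching it.
    print(task_yaml)
```

Keeping the task definition in a plain file means the same description can be reused unchanged whichever provider ends up running it.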

Insight

The rapid adoption suggests that AI teams increasingly face infrastructure fragmentation as workloads scale beyond a single provider. The growth pattern indicates that the market may be shifting toward infrastructure-agnostic solutions as organizations seek greater flexibility in their AI compute strategies. The timing likely reflects two pressures: AI workloads that need increasingly diverse compute resources, and the rising cost of being locked in to one cloud vendor.
