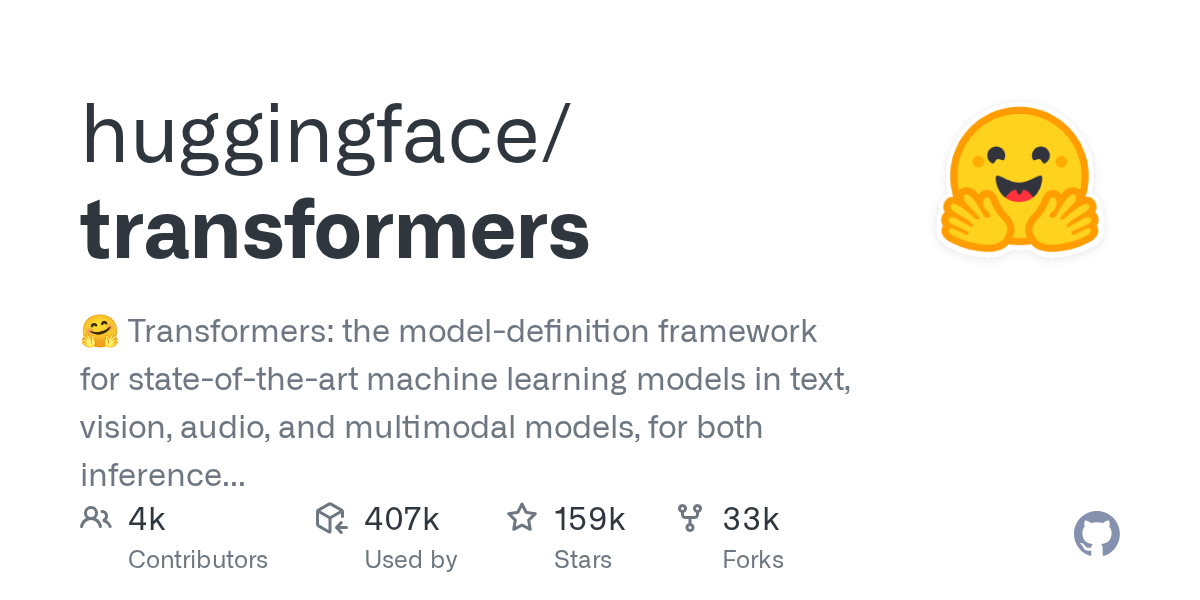

📊 Stats & Trend

| Metric | Value |
| --- | --- |
| ⭐ Stars (total) | 158,794 |
| 📈 Star Growth (Mar 29 → Apr 05) | +158,794 |
| 🔥 Star Growth (Apr 04 → Apr 05) | +158,794 |
| 🔥 Trend | Exploding |
| 📊 Trend Score | 127035 |
| 💻 Stack | Python |

Overview

Hugging Face’s Transformers library is recorded with a gain of +158,794 stars this week, a figure equal to its total of 158,794 stars. It is one of the most widely used frameworks for working with state-of-the-art machine learning models. This Python toolkit provides unified access to transformer models for text, vision, audio, and multimodal applications, and supports both inference and training workflows.

Key Features

• Pre-trained model hub with thousands of state-of-the-art models from leading research organizations

• Unified API for text processing (BERT, GPT), computer vision (ViT, CLIP), and audio processing models

• Seamless integration with PyTorch, TensorFlow, and JAX frameworks

• Built-in tokenizers, data collators, and preprocessing utilities for multiple data types

• Production-ready inference pipelines that handle preprocessing and postprocessing, with configurable device placement (CPU or GPU)

• Fine-tuning capabilities with trainer classes and distributed training support

Use Cases

• Natural language processing applications like sentiment analysis, question answering, and text generation

• Computer vision tasks including image classification, object detection, and image-to-text generation

• Multimodal AI applications combining text and images for content understanding

• Research prototyping for testing new transformer architectures and training approaches

• Production deployment of AI models with standardized inference pipelines
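Several of the use cases above correspond to task identifiers accepted by the library's `pipeline` factory. The sketch below is one way to organize that mapping; the dictionary `TASK_FOR_USE_CASE` and the helper `build_pipeline` are hypothetical names chosen for illustration, not part of Transformers, and the list of tasks is deliberately partial.

```python
# Illustrative mapping from a use case named above to a pipeline task string.
# The dictionary and helper are assumptions for this sketch, not library API.
TASK_FOR_USE_CASE = {
    "sentiment analysis": "sentiment-analysis",
    "question answering": "question-answering",
    "text generation": "text-generation",
    "image classification": "image-classification",
    "object detection": "object-detection",
}


def build_pipeline(use_case, **kwargs):
    """Create a pipeline for a named use case.

    Extra keyword arguments (for example model="...") are passed
    through to transformers.pipeline unchanged.
    """
    # Look the task up first so an unknown use case fails fast with KeyError.
    task = TASK_FOR_USE_CASE[use_case]

    # Imported lazily: the mapping stays usable without the library installed.
    from transformers import pipeline

    return pipeline(task, **kwargs)
```

Calling `build_pipeline("question answering")` would download a default model for that task on first use, so it needs network access and an installed backend such as PyTorch.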

Why It’s Trending

The tool is recorded as gaining +158,794 stars this week, a sign of strong momentum in AI Infrastructure. The growth suggests rising developer interest in accessible, production-ready transformer model deployment. It may also reflect a broader shift in how teams build with AI: away from custom implementations and toward standardized, battle-tested frameworks that shorten development cycles.

Pros

• Comprehensive model ecosystem with consistent APIs across different AI domains

• Strong community support and extensive documentation from Hugging Face

• Production-ready with optimizations for inference speed and memory efficiency

• Regular updates incorporating latest research breakthroughs and model releases

Cons

• Large dependency footprint can increase project complexity and deployment size

• Memory requirements for larger models may limit usage on resource-constrained environments

• Rapid API evolution can require frequent code updates to maintain compatibility
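The memory concern above can be made concrete with a rule-of-thumb estimate: the weights alone occupy roughly the parameter count times the bytes per parameter. The following is a rough sketch under that assumption; the function name and the parameter counts used in the comments are illustrative, and the estimate ignores activations, optimizer state, and framework overhead.

```python
# Bytes needed to store one parameter in common numeric formats.
BYTES_PER_PARAM = {"float32": 4, "float16": 2, "bfloat16": 2, "int8": 1}


def weight_memory_gib(num_params, dtype="float32"):
    """Estimate memory for model weights only, in GiB (1 GiB = 1024**3 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1024**3


# Hypothetical model sizes, chosen only to illustrate the arithmetic.
small = weight_memory_gib(110_000_000, "float32")    # about 0.41 GiB
large = weight_memory_gib(7_000_000_000, "float16")  # about 13.04 GiB
```

Halving the precision halves the weight footprint, which is why lower-precision formats are a common first step on resource-constrained hardware.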

Pricing

Open source under the Apache 2.0 license and free to use. Hugging Face offers paid cloud services for model hosting and inference, but the core Transformers library requires no licensing fees.

Getting Started

Install with `pip install transformers` (plus a backend such as PyTorch) and load pre-trained models in a few lines of code. The pipeline API provides immediate access to common tasks without requiring deep ML expertise.
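As a minimal sketch of the pipeline workflow, assuming the library and a backend are installed, the example below runs sentiment analysis on one sentence. The helper `top_label`, the `main` function, and the sample sentence are illustrative names and data, not part of the library; only `pipeline("sentiment-analysis")` is a library call.

```python
def top_label(classifier, text):
    """Return the highest-scoring label for `text`.

    `classifier` is any callable returning a list of
    {"label": str, "score": float} dicts, the shape a
    text-classification pipeline returns for a single string.
    """
    results = classifier(text)
    best = max(results, key=lambda r: r["score"])
    return best["label"]


def main():
    # Imported lazily so top_label can be reused without the library.
    from transformers import pipeline

    # With no model argument, the pipeline selects a default checkpoint
    # for the task and downloads it on first use (network required).
    classifier = pipeline("sentiment-analysis")
    print(top_label(classifier, "This library makes prototyping much easier."))
```

Calling `main()` performs the download and prints the predicted label. Pinning a specific checkpoint with the `model` argument is generally preferable for reproducible results.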

Insight

The explosive growth suggests that developers are consolidating around standardized AI tooling rather than building custom transformer implementations. The likely drivers are demand for faster time-to-market and reliable model performance. The trend may also reflect a maturation phase in which teams prioritize proven frameworks over experimental approaches, plausibly as a result of increasing enterprise adoption of transformer-based AI applications.
